Research Platform

Enterprise-grade astronomical computing on a seven-node Proxmox cluster

Platform Overview

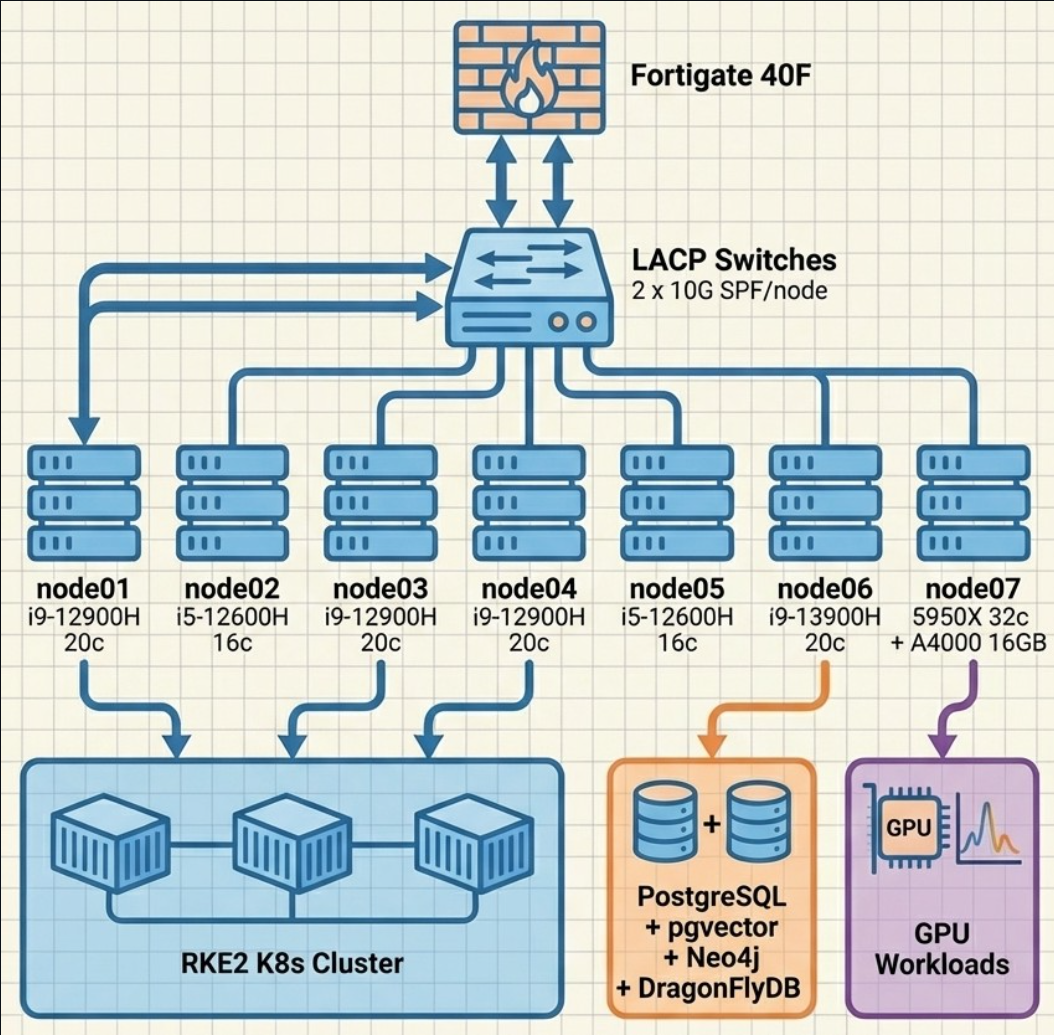

The Proxmox Astronomy Lab is a production-scale computing platform built on a seven-node Proxmox VE cluster with hybrid RKE2 Kubernetes and strategic VM architecture. We're demonstrating that sophisticated astronomical computing doesn't require institutional resources, just deliberate engineering and open-science principles.

Cluster Specifications

| Specification | Value |
|---|---|
| Nodes | 7 |
| Total Cores | 144 |
| Total RAM | 704 GB |
| Total NVMe | 26 TB |
| Network Fabric | 2x10G LACP per node |
| GPU | RTX A4000 16 GB |

Node Inventory

Node details (7 nodes)

| Node | CPU | Cores | RAM | Role |
|---|---|---|---|---|
| node01 | i9-12900H | 20 | 96 GB | Compute (K8s) |
| node02 | i5-12600H | 16 | 96 GB | Light compute + storage |
| node03 | i9-12900H | 20 | 96 GB | Compute (K8s) |
| node04 | i9-12900H | 20 | 96 GB | Compute (K8s) |
| node05 | i5-12600H | 16 | 96 GB | Light compute + storage |
| node06 | i9-13900H | 20 | 96 GB | Heavy compute (databases) |
| node07 | AMD 5950X | 32 | 128 GB | GPU compute |
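The totals listed under Cluster Specifications can be cross-checked against this inventory. The following is a minimal sketch with the per-node values transcribed from the table; the names `NODES` and `cluster_totals` are illustrative, not part of the lab's tooling.

```python
# Node inventory transcribed from the table above: (name, cores, ram_gb).
NODES = [
    ("node01", 20, 96),
    ("node02", 16, 96),
    ("node03", 20, 96),
    ("node04", 20, 96),
    ("node05", 16, 96),
    ("node06", 20, 96),
    ("node07", 32, 128),
]


def cluster_totals(nodes):
    """Return (node_count, total_cores, total_ram_gb) for an inventory list."""
    total_cores = sum(cores for _, cores, _ in nodes)
    total_ram_gb = sum(ram_gb for _, _, ram_gb in nodes)
    return len(nodes), total_cores, total_ram_gb


if __name__ == "__main__":
    count, cores, ram_gb = cluster_totals(NODES)
    print(f"{count} nodes, {cores} cores, {ram_gb} GB RAM")
    # prints: 7 nodes, 144 cores, 704 GB RAM
```

The result matches the published figures of 144 cores and 704 GB of RAM.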

Data Science VM Inventory

VM details (9 VMs)

| VM | vCPU | RAM | Purpose |
|---|---|---|---|
| radio-k8s01 | 12 | 48 GB | Kubernetes primary |
| radio-k8s02 | 12 | 48 GB | Kubernetes worker |
| radio-k8s03 | 12 | 48 GB | Kubernetes worker |
| radio-gpu01 | 12 | 48 GB | GPU worker (A4000) |
| radio-pgsql01 | 8 | 32 GB | Research PostgreSQL |
| radio-pgsql02 | 4 | 16 GB | Application PostgreSQL |
| radio-neo4j01 | 6 | 24 GB | Graph database |
| radio-fs02 | 4 | 6 GB | SMB file server |
| radio-agents01 | 8 | 32 GB | AI agents, monitoring |
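As a rough illustration of how much of the cluster these nine VMs reserve, the sketch below sums the vCPU and RAM columns and compares them with the cluster totals. It assumes the table's RAM column is in the same unit as the cluster total, and it covers only the data science VMs listed here, not any other guests that may run on the cluster. The names `VMS` and `allocation_summary` are illustrative.

```python
# Data science VMs transcribed from the table above: (name, vcpu, ram_gb).
VMS = [
    ("radio-k8s01", 12, 48),
    ("radio-k8s02", 12, 48),
    ("radio-k8s03", 12, 48),
    ("radio-gpu01", 12, 48),
    ("radio-pgsql01", 8, 32),
    ("radio-pgsql02", 4, 16),
    ("radio-neo4j01", 6, 24),
    ("radio-fs02", 4, 6),
    ("radio-agents01", 8, 32),
]

# Cluster totals from the Cluster Specifications table.
CLUSTER_CORES = 144
CLUSTER_RAM_GB = 704


def allocation_summary(vms, cluster_cores, cluster_ram_gb):
    """Sum VM reservations and express them as fractions of cluster capacity."""
    vcpu = sum(v for _, v, _ in vms)
    ram_gb = sum(r for _, _, r in vms)
    return {
        "vcpu": vcpu,
        "ram_gb": ram_gb,
        "vcpu_fraction": vcpu / cluster_cores,
        "ram_fraction": ram_gb / cluster_ram_gb,
    }


if __name__ == "__main__":
    s = allocation_summary(VMS, CLUSTER_CORES, CLUSTER_RAM_GB)
    print(f"{s['vcpu']} vCPU ({s['vcpu_fraction']:.0%}), "
          f"{s['ram_gb']} GB RAM ({s['ram_fraction']:.0%})")
    # prints: 78 vCPU (54%), 302 GB RAM (43%)
```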

Architecture Highlights

Hybrid K8s + VM

RKE2 for dynamic ML workloads, strategic VMs for databases and services that don't benefit from orchestration

PostgreSQL as materialization engine

Catalog joins and derived computations happen in PostgreSQL; results are exported to Parquet for distribution

GPU compute

RTX A4000 (16 GB VRAM) for ML training and inference workloads

10G networking

2x10G LACP per node for high-bandwidth data movement between compute and storage
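To put the link speed in perspective, here is a back-of-the-envelope sketch of line-rate transfer times. It assumes decimal units (1 GB = 8 gigabits) and ignores protocol overhead and storage throughput, so the results are lower bounds on real transfer time. LACP generally balances traffic per flow, so a single stream is typically limited to one 10G member link, while several parallel flows can approach the 20G aggregate. The function name `transfer_seconds` is illustrative, and the 108 GB figure is the spectral tile volume quoted under Data Pipeline.

```python
def transfer_seconds(size_gb, link_gbps, efficiency=1.0):
    """Seconds to move size_gb (decimal gigabytes) over a link_gbps link.

    efficiency is the usable fraction of line rate (1.0 = ideal, no overhead).
    """
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return size_gb * 8 / (link_gbps * efficiency)


if __name__ == "__main__":
    tiles_gb = 108  # spectral tiles in Parquet, per the Data Pipeline section
    print(f"single flow, one 10G link: {transfer_seconds(tiles_gb, 10):.1f} s")
    print(f"parallel flows, 2x10G:     {transfer_seconds(tiles_gb, 20):.1f} s")
    # prints 86.4 s and 43.2 s respectively
```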

Data Pipeline

PostgreSQL serves as the materialization engine: value-added catalog (VAC) joins and derived computations run there, and the final analysis-ready data (ARD) products are exported to Parquet for distribution and analysis. The pipeline manages approximately 32 GB of catalog data in PostgreSQL and 108 GB of spectral tiles in Parquet format.
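The join-then-export pattern described above can be sketched as follows. This is not the lab's pipeline code. To stay self-contained it uses an in-memory SQLite database as a stand-in for PostgreSQL, and the table names (`targets`, `vac_redshift`), columns, and values are invented for illustration. `materialize` relies only on the standard Python DB-API cursor interface, so the same function should work with a PostgreSQL driver connection. `export_parquet` assumes the third-party `pyarrow` package is installed.

```python
import sqlite3


def materialize(conn, sql):
    """Run a query in the database and return the result as {column: [values]}."""
    cur = conn.cursor()
    cur.execute(sql)
    names = [col[0] for col in cur.description]
    rows = cur.fetchall()
    return {name: [row[i] for row in rows] for i, name in enumerate(names)}


def export_parquet(columns, path):
    """Write a column dict to a Parquet file (requires the pyarrow package)."""
    import pyarrow as pa
    import pyarrow.parquet as pq

    pq.write_table(pa.table(columns), path)


def demo_connection():
    """Build a tiny stand-in database with made-up rows (SQLite, not PostgreSQL)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE targets (target_id INTEGER PRIMARY KEY, ra REAL, dec REAL);
        CREATE TABLE vac_redshift (target_id INTEGER, z REAL);
        INSERT INTO targets VALUES (1, 10.5, -2.0), (2, 11.0, 3.5), (3, 12.25, 0.0);
        INSERT INTO vac_redshift VALUES (1, 0.12), (3, 0.85);
        """
    )
    return conn


# The join runs inside the database; only the joined result leaves it.
JOIN_SQL = """
    SELECT t.target_id AS target_id, t.ra AS ra, t.dec AS dec, v.z AS z
    FROM targets AS t
    JOIN vac_redshift AS v ON v.target_id = t.target_id
    ORDER BY t.target_id
"""

if __name__ == "__main__":
    columns = materialize(demo_connection(), JOIN_SQL)
    print(columns["target_id"])  # prints: [1, 3]
    export_parquet(columns, "joined_catalog.parquet")
```

For catalog-scale results, fetching everything into memory at once would not be appropriate; a real export would more likely stream the result in batches.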

Governance and Operational Practices

Operationally, the cluster is managed under the same discipline the org applies to its research: documented, reproducible, and publicly reviewable. Specifics:

- Security baseline: working toward CIS Controls v8.1 Implementation Group 1 alignment, with documentation rewrites in progress

- AI governance: model cards track intended use, data sources, evaluation results, and known limitations for the AI systems used across the org. NIST AI RMF alignment is tracked against the CIS-RAM method

- Published templates: governance templates are maintained in the NIST AI RMF Cookbook for other small research orgs to adapt

- Automation: Ansible-managed configuration, version-controlled infrastructure, passwordless-sudo fleet operations via a dedicated `ansible01` identity

None of this is certification; it's operational practice applied voluntarily at volunteer-organization scale.
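The model-card fields named under AI governance map naturally onto a small record type. The sketch below is one possible shape, not the org's published template: the class name `ModelCard`, the `missing_sections` helper, and the example values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Record of the four areas the governance list says model cards track."""

    name: str
    intended_use: str
    data_sources: list[str] = field(default_factory=list)
    evaluation_results: dict[str, float] = field(default_factory=dict)
    known_limitations: list[str] = field(default_factory=list)

    def missing_sections(self):
        """Return the names of fields that are still empty."""
        return [key for key, value in asdict(self).items() if not value]

    def to_json(self):
        """Serialize the card for version control or public review."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical card with only the first two fields filled in.
    card = ModelCard(
        name="example-classifier",
        intended_use="Illustrative placeholder, not a real system",
    )
    print(card.missing_sections())
    # prints: ['data_sources', 'evaluation_results', 'known_limitations']
```

A check like `missing_sections` could run in review to flag incomplete cards before they are published.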